Final Solution (Part 1)

Hi folks. This week's posts will be our last ones, and they present our final solution. In this post we describe our data center solution, bringing these four months of posts to a close. When we were given the task of making design decisions for the construction of a data center for a banking entity, we were also given a list of vendors we had to work with:

- Network: HPE

- Servers & storage: HPE

- Security:

Hence, our solution is a fusion of technologies, some standard and others vendor specific. Nevertheless, as these last two posts will show, we have worked to merge all those technologies and look for synergies between them that allow for a more robust, performant and integrated solution.

SERVERS & STORAGE

Regarding servers and storage, our choice was pretty easy when working with HPE, since they had a very flexible, powerful and integrated solution for both that largely covers the needs of our data center. Moreover, we found a success case for that device at a banking entity, which reassured us in our choice. The device in question is the HPE Superdome X, a mission-critical blade server that was ideal for our purposes.

This device offers a blade-based integrated solution for computing and storage, which eliminates the need for a storage network infrastructure separate from the one used by the computing resources. Also, the Superdome X provides purpose-built compute optimized for high availability, scalability and efficiency, and is aimed at the most demanding and critical business processing and decision support enterprise workloads, which is the case of banking institutions. According to HPE, Superdome X maximizes the uptime of critical applications with up to 20x greater reliability than platforms relying on soft partitions alone and 60% less downtime than other x86 platforms. Up to 16 sockets and 48 TB of memory can handle all traditional and in-memory databases and large scale-up x86 workloads while offering a lower Total Cost of Ownership (TCO) than other solutions. Moreover, Superdome X can isolate critical applications from other application failures with HPE's x86 hard partitioning (HPE nPars). On the storage side, HPE 3PAR Remote Copy replication is compatible with VMware vVols, which allows granular protection of the virtual machines.

To learn more about the solutions provided with these blade servers and their technical specifications, click here and here. It is important to note that, although the storage devices are integrated, the communication protocol used depends on the storage blade that is installed; it will mainly be FC, FCoE, iSCSI or Gigabit Ethernet. Since we will be using vVols on top of that, the transport is just an enabler for the actual storage solution.

SERVICES

With the devices described above we have great hardware to hold all our applications and services, but we still need both a hypervisor that serves as the base layer for virtualization and a virtualization environment that helps configure, orchestrate and manage it.

To that end we'll use VMware's virtualization solution, vSphere, with its ESXi hypervisor, to virtualize the instances running on our servers. Its virtualization-related technologies can be integrated both with the HPE network and services virtualization technologies and, as will be seen later, with the security and QoS solutions we will work with. VMware is a strong vendor in the virtualization ecosystem, which gives us a lot of guarantees and also makes it more likely that there will be integrations with other hardware vendors, letting us use the best of each in an integrated manner.

All this VMware-based virtualization ecosystem will be deployed under HPE Distributed Cloud Networking (DCN), which provides large-scale network virtualization, including federation, across multiple data centers. Virtual networks can be configured and consumed through HPE Helion OpenStack, HPE Matrix Operating Environment (MOE) or Cloud Service Automation (CSA). This layer acts as a broker for cloud services, providing information that is also sent to the HPE Virtualized Services Directory (VSD), which in turn provides a centralized orchestration and policy management framework. These policies are passed to the HPE Virtualized Services Controller (VSC) for dynamic deployment within and across data centers. To dynamically create VXLAN tunnels, the HPE VSC uses OVSDB towards HPE hardware VTEPs and OpenFlow towards software VTEPs, with HPE Virtual Routing and Switching (VRS) agents residing in the hypervisors. The solution supports open-source KVM, VMware ESXi, Xen and Hyper-V, so we are completely covered.

This infrastructure is the giant of the three proposed. With its three layers it completely abstracts the services running on the infrastructure and automatically manages them across all available resources, including those in different data centers.
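To make the VXLAN tunnels mentioned above a bit more concrete, here is a minimal sketch of the 8-byte VXLAN header defined in RFC 7348, which carries the 24-bit network identifier (VNI) that keeps each virtual network separate on the shared underlay. It only illustrates the encapsulation format; it is not how the VSC, OVSDB or OpenFlow program the VTEPs, and the tenant names and VNI values are made up for the example.

```python
import struct

VXLAN_FLAG_VNI_VALID = 0x08  # "I" flag from RFC 7348: the VNI field is valid


def pack_vxlan_header(vni: int) -> bytes:
    """Build the 8-byte VXLAN header for a 24-bit VXLAN Network Identifier."""
    if not 0 <= vni < 2**24:
        raise ValueError("VNI must fit in 24 bits")
    # Word 1: flags (8 bits) followed by 24 reserved bits.
    # Word 2: VNI (24 bits) followed by 8 reserved bits.
    return struct.pack("!II", VXLAN_FLAG_VNI_VALID << 24, vni << 8)


def unpack_vni(header: bytes) -> int:
    """Return the VNI carried in a VXLAN header, checking the valid-VNI flag."""
    flags_word, vni_word = struct.unpack("!II", header[:8])
    if not (flags_word >> 24) & VXLAN_FLAG_VNI_VALID:
        raise ValueError("VNI flag not set")
    return vni_word >> 8


# Hypothetical tenant-to-VNI assignment, for illustration only.
tenant_vni = {"retail-banking": 5001, "trading": 5002}
header = pack_vxlan_header(tenant_vni["trading"])
print(header.hex())        # 0800000000138a00
print(unpack_vni(header))  # 5002
```

In a real deployment this header sits inside a UDP datagram between two VTEPs, and the controller decides which VNI each virtual machine port belongs to.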

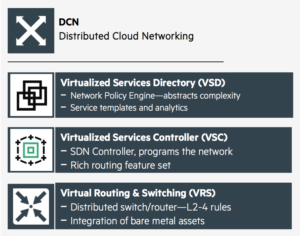

Since we are working in a banking environment, which has demanding performance requirements and several data centers, we are going with the third proposed solution, HPE Distributed Cloud Networking. With it we can, first of all, integrate all the bare metal assets and fully interconnect them, including those that are geographically distant, thereby pooling all the computing power and getting the best performance out of it. Then, through the VSCs, the network and services are managed automatically at a lower level, interconnecting the bare metal with the abstracted services, which are managed centrally for the whole system on the VSD. This solution allows us to consolidate the computational resources and reduce the amount of physical equipment required to achieve the goals the infrastructure is meant to satisfy. Also, through the use of the VSD, the VSC and HPE's specific protocols and technologies, services can be moved between data centers, so that all the geographic locations can be thought of as one big flat infrastructure.

SECURITY

Regarding security, since our data center is virtualized and SDN-controlled thanks to the HPE DCN solution, it was not so much about which device we would use but about which solutions we could integrate with our existing virtualized environment. Hence we built the security segment mainly with FortiGate-VMX, which is Fortinet’s virtualized Next Generation Firewall (NGFW).

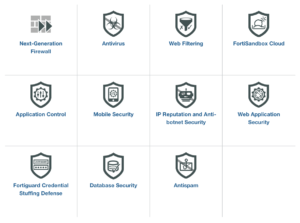

But FortiGate-VMX is not only an NGFW, since it can include other services from FortiGuard Security Services. FortiGuard is a wide range of security functions that can be deployed on Fortinet equipment. Those that can be implemented with FortiGate-VMX are NGFW, Antivirus, Web Filtering, FortiSandbox Cloud (IDS for the cloud), Application Control, Mobile Security, IP Reputation and Anti-botnet Security.

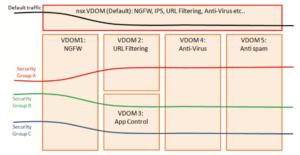

FortiGate-VMX also offers Fortinet’s Virtual Domain (VDOM) technology. Defining separate Virtual Domains in combination with VMware’s security groups enables segmentation of security functions and multi-tenancy. Mapping NSX Service Profiles to Fortinet VDOMs segregates the policies to be enforced for specific traffic flows. This model avoids the added complexity of registering a specific security solution for each tenant hosted in the environment. Furthermore, any additions or other changes to these Security Groups in the NSX Manager are automatically associated with the proper FortiGate-VMX security policy, without requiring any manual changes in the FortiGate-VMX Service Manager. This applies not only to changes: new VM workloads are also associated with their proper security policy in real time upon creation, avoiding the lag or human error caused by manual intervention.
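The chain described above (security group, then service profile, then VDOM) can be pictured with a small toy model. This is only a sketch of the lookup logic under our own assumptions: it does not call the NSX Manager or any FortiGate API, and every class, group, profile and VDOM name in it is hypothetical.

```python
from dataclasses import dataclass, field
from typing import Dict


@dataclass
class SecurityDirectory:
    """Toy in-memory model: security group -> service profile -> VDOM."""
    group_to_profile: Dict[str, str] = field(default_factory=dict)
    profile_to_vdom: Dict[str, str] = field(default_factory=dict)
    vm_to_group: Dict[str, str] = field(default_factory=dict)

    def add_vm(self, vm: str, group: str) -> str:
        """Place a VM in a security group and return the VDOM that now applies."""
        if group not in self.group_to_profile:
            raise KeyError(f"unknown security group: {group}")
        self.vm_to_group[vm] = group
        return self.vdom_for(vm)

    def vdom_for(self, vm: str) -> str:
        """Resolve a VM to its VDOM through its group and service profile."""
        profile = self.group_to_profile[self.vm_to_group[vm]]
        return self.profile_to_vdom[profile]


# Hypothetical tenants and names, for illustration only.
directory = SecurityDirectory(
    group_to_profile={"sg-trading": "profile-trading", "sg-web": "profile-web"},
    profile_to_vdom={"profile-trading": "vdom-tenant-a", "profile-web": "vdom-tenant-b"},
)
print(directory.add_vm("vm-042", "sg-trading"))  # vdom-tenant-a
print(directory.add_vm("vm-042", "sg-web"))      # vdom-tenant-b after a group change
```

The point of the indirection is that nobody edits a per-VM firewall rule: a new or moved VM inherits its policy from group membership alone.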

QoS

Regarding the QoS setup, configuring explicit QoS mechanisms is not always the best way to actually guarantee the QoS requirements of the network. In the specific case of a banking data center we do need to apply some specific QoS techniques to lower the latency of sensitive traffic as much as possible, but only for very specific applications. In general, we'll mostly focus on guaranteeing that there is no congestion on the network and that the throughput at every point matches the actual demand and QoS requirements. To that end, we'll oversize the bandwidth at the critical points so that delay caused by elephant flows is unlikely to happen in the first place. Congestion detection mechanisms, and above all ECN marking, will also be applied. ECN allows congestion to be detected and the transmission rate to be reduced before packets get dropped by the network, improving network performance by preventing retransmissions.

As already mentioned, a banking environment will have some really sensitive applications, such as stock trading, that require special treatment and therefore the implementation of QoS mechanisms per se. In those cases, it is important to keep the network configuration as clean as possible. Ingress policing would classify and queue packets based on DSCP or 802.1p markings. Application-aware processing, which is appearing a lot in recent software for network devices, would be best implemented on the hypervisors (for which we have great tools in vSphere and in the DCN infrastructure) or on the end hosts, not in the ToR switch.
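As a rough illustration of the DSCP and ECN handling discussed above, the sketch below splits the IP ToS / Traffic Class byte into its DSCP (upper 6 bits) and ECN (lower 2 bits) fields and applies a very simplified marking rule: once the queue passes a threshold, ECN-capable packets are marked Congestion Experienced instead of being dropped. The single fixed threshold and the function names are our own assumptions; real switches typically mark probabilistically (RED/WRED style) and are configured through the vendor's tooling rather than code like this.

```python
from typing import Optional, Tuple

# ECN codepoints from RFC 3168 (lower two bits of the ToS / Traffic Class byte).
NOT_ECT, ECT_1, ECT_0, CE = 0b00, 0b01, 0b10, 0b11
DSCP_EF = 46  # Expedited Forwarding, commonly used for latency-sensitive traffic


def split_tos(tos: int) -> Tuple[int, int]:
    """Split the ToS / Traffic Class byte into (DSCP, ECN)."""
    return tos >> 2, tos & 0b11


def on_enqueue(tos: int, queue_depth: int, mark_threshold: int) -> Optional[int]:
    """Return the ToS byte to forward, or None if the packet is dropped.

    Simplified rule (an assumption, not a vendor algorithm): below the
    threshold nothing changes; at or above it, ECN-capable packets are
    marked CE and non-ECN packets are dropped.
    """
    dscp, ecn = split_tos(tos)
    if queue_depth < mark_threshold:
        return tos
    if ecn == NOT_ECT:
        return None  # sender cannot react to marks, so dropping is the only signal
    return (dscp << 2) | CE


tos = (DSCP_EF << 2) | ECT_0                                 # EF traffic from an ECN-capable sender
print(split_tos(tos))                                        # (46, 2)
print(on_enqueue(tos, queue_depth=10, mark_threshold=50))    # 186, forwarded unchanged
print(on_enqueue(tos, queue_depth=80, mark_threshold=50))    # 187, marked CE
```

The receiver echoes the CE mark back to the sender, which slows down before any packet is lost; that is the retransmission saving mentioned above.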